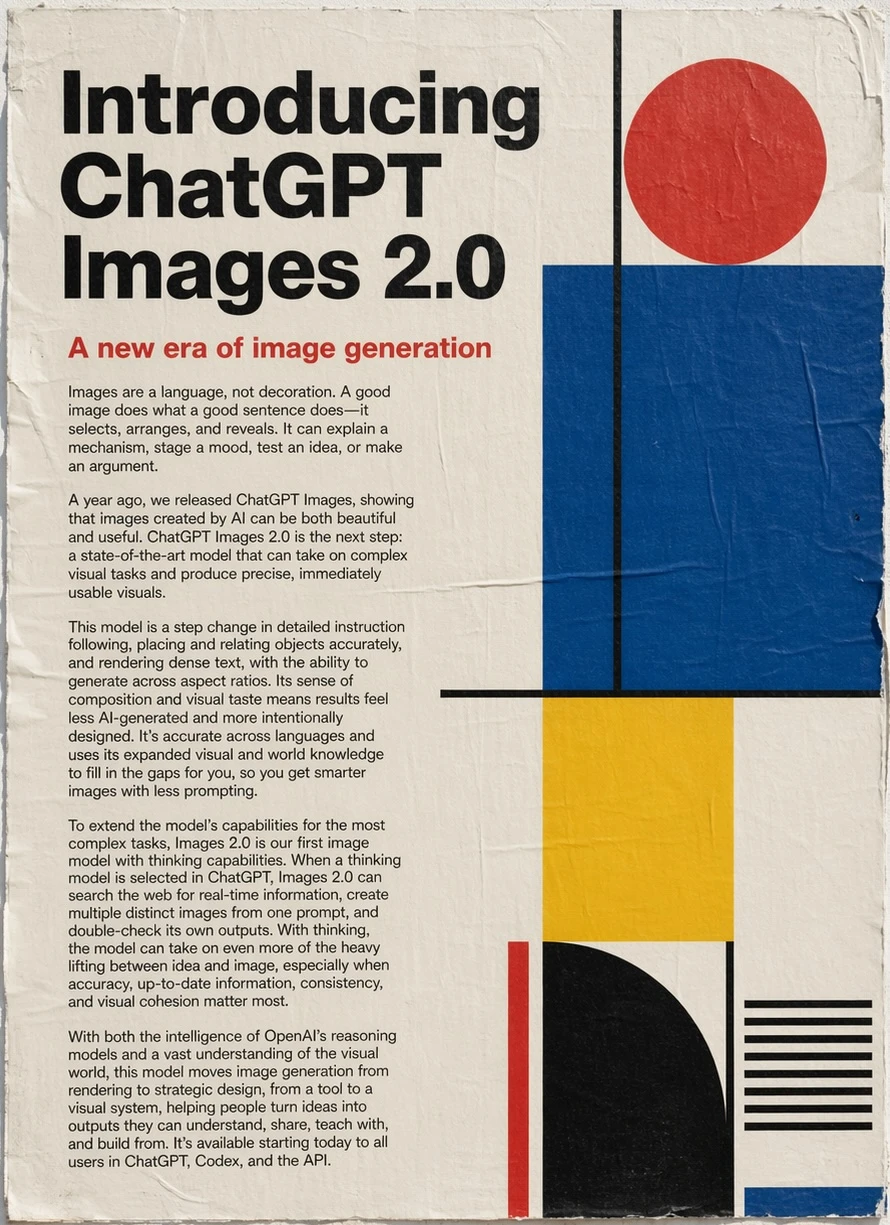

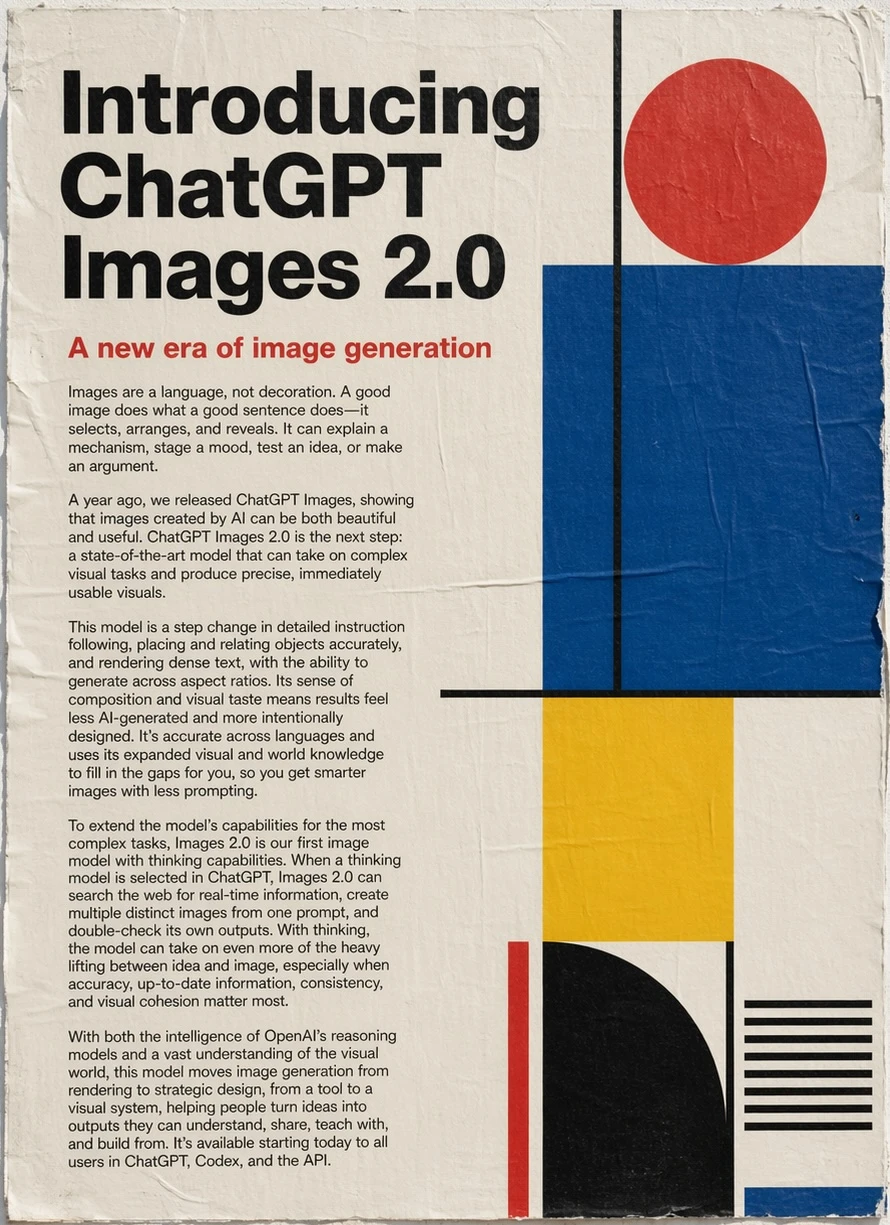

On April 21, 2026, OpenAI launched ChatGPT Images 2.0 — its most significant image generation upgrade to date. Available via the API as gpt-image-2, the model brings OpenAI's o-series reasoning architecture into visual creation for the first time: images that plan, verify, and self-correct before delivery.

There's also a hard deadline attached: DALL-E 2 and DALL-E 3 retire on May 12, 2026. Any existing integration must migrate before then.

What Is ChatGPT Images 2.0?

ChatGPT Images 2.0 is the direct successor to GPT Image 1.5. The core addition is native reasoning — the model analyzes prompts, plans composition, and checks its own output before returning a result. This is OpenAI's first image model to incorporate that kind of pre-generation deliberation.

It operates in two modes:

- Instant mode — available to all users, including the free tier. Core quality improvements over the previous generation.

- Thinking mode — restricted to Plus ($20/mo), Pro ($200/mo), Business, and Enterprise subscribers. Web search, multi-image batching, layout reasoning, and output verification.

On the Image Arena leaderboard, gpt-image-2 holds the number one position across every category, leading the previous top model by 242 points — the largest margin ever recorded on the benchmark.

Key Features

Reasoning-First Image Generation

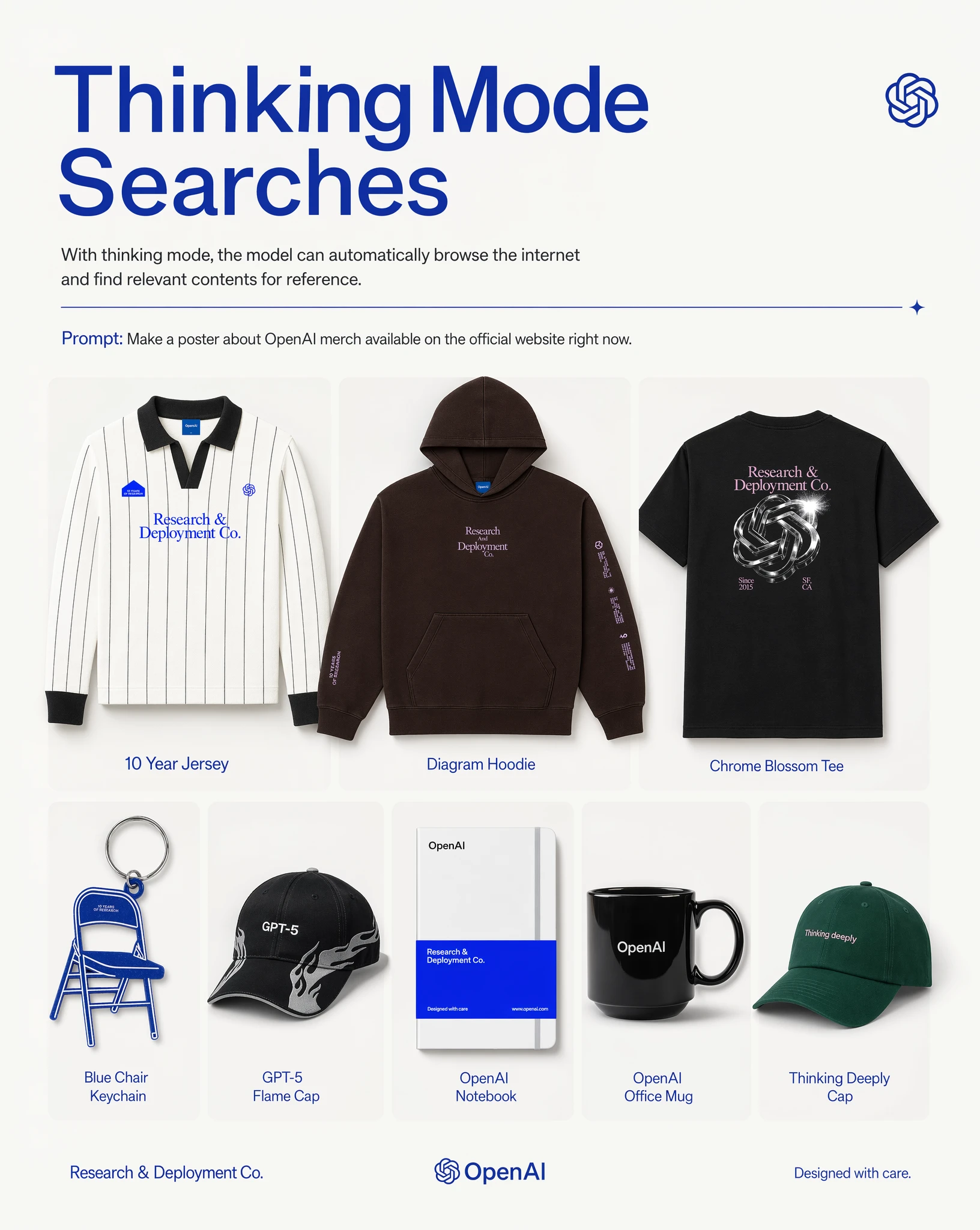

The model doesn't generate blindly. In Thinking mode, it:

- Analyzes the prompt in depth

- Fetches real-time context via web search when relevant

- Plans the layout and composition

- Generates the image

- Self-verifies the output and regenerates if needed

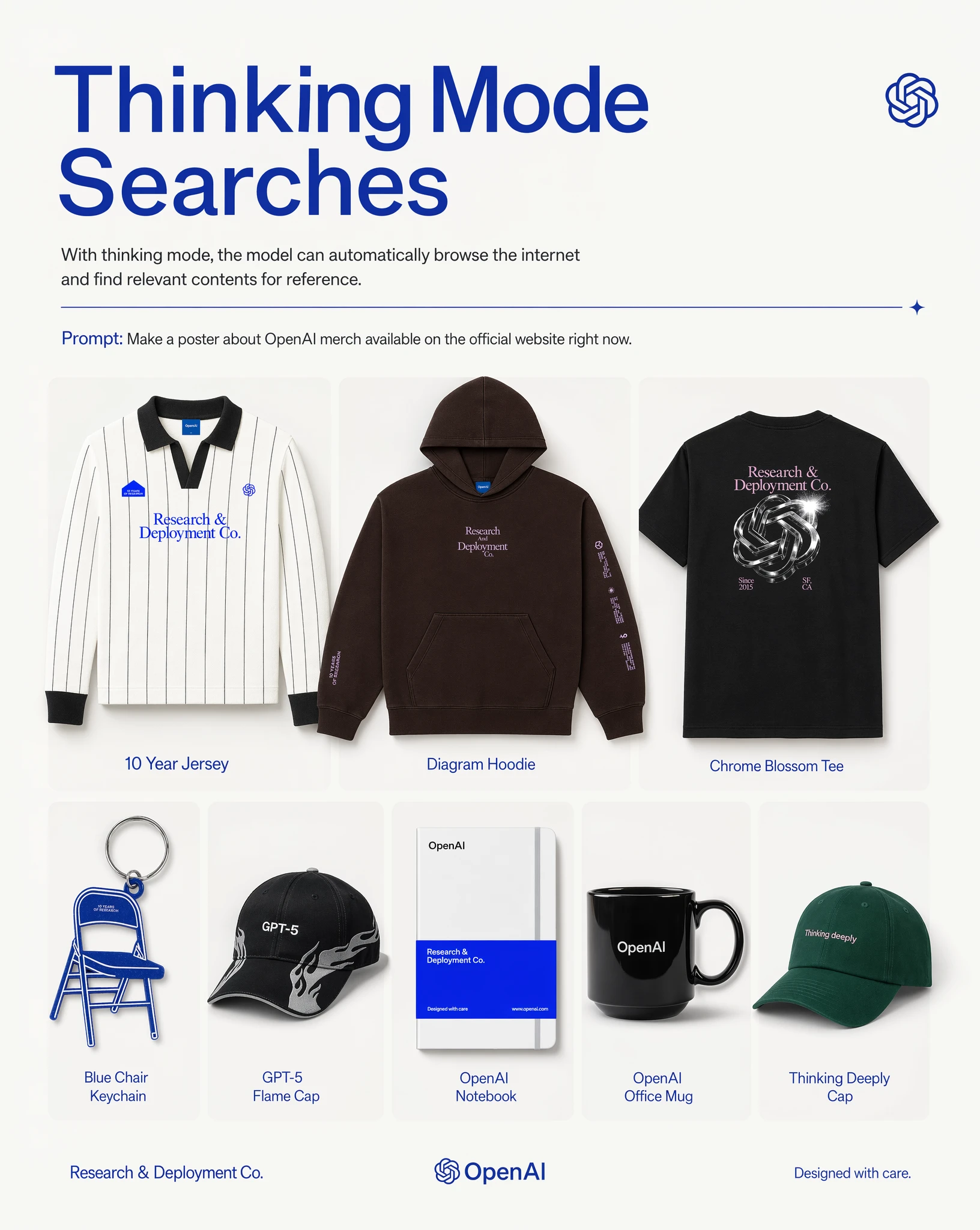

The example above shows Thinking mode's web search in action: given the prompt "make a poster of merch available on the OpenAI website right now," the model browsed the actual site and produced a poster with the correct, current products.

For complex scenes — dense layouts, multi-element compositions, text-heavy designs — this significantly increases first-attempt success rates.

Thinking vs. Standard: Same Prompt, Different Output

The two images below compare the same prompt rendered in Standard and Thinking modes. Prompt: a monkey riding a tiger in a desert, with an astronaut acting as a horse in the background.

Standard mode:

Thinking mode:

Thinking mode produces noticeably better lighting, shadow detail, and spatial coherence across the scene.

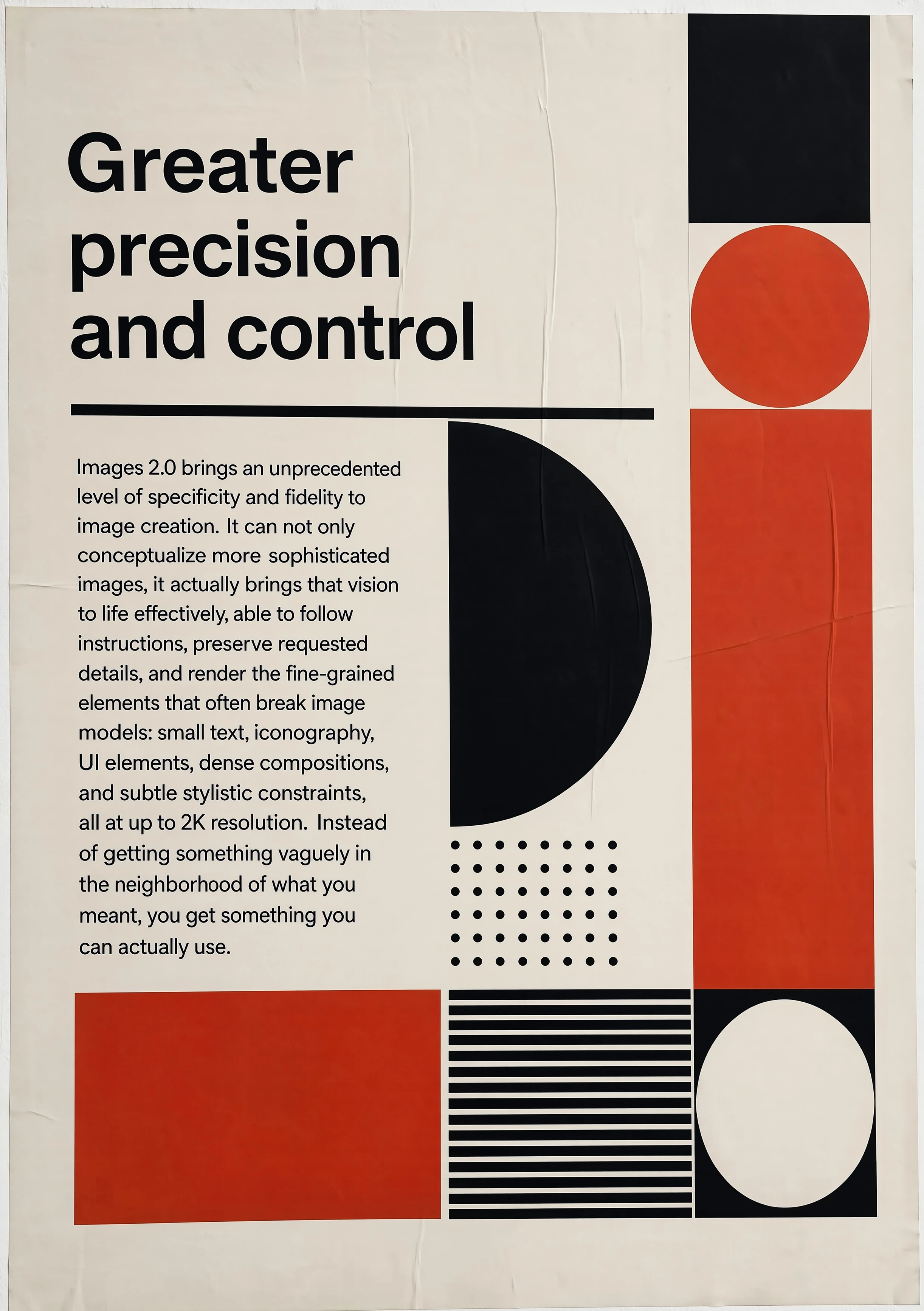

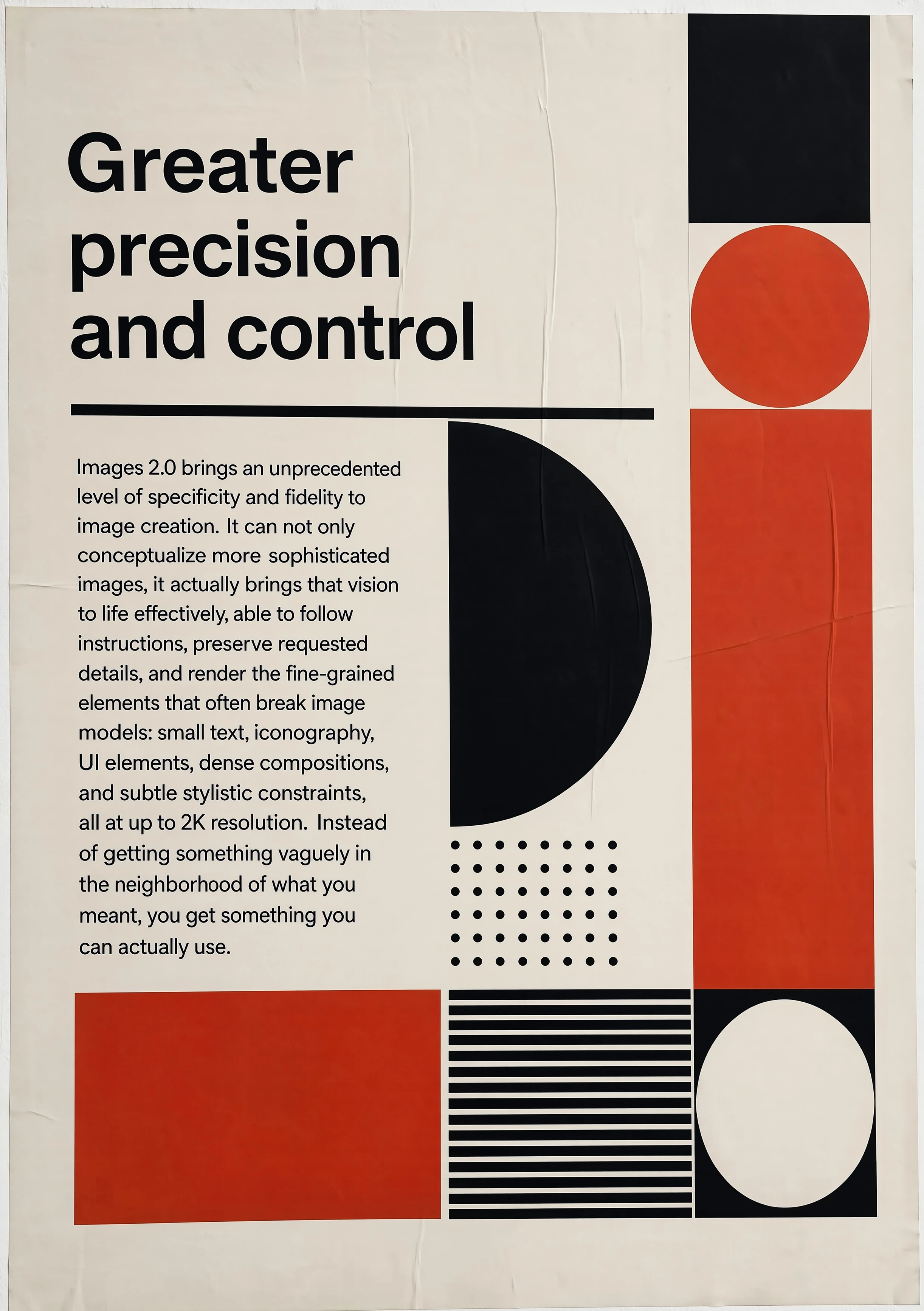

Greater Precision and Control

Images 2.0 doesn't just improve aesthetics — it improves instruction-following fidelity. Small text, iconography, UI elements, and dense compositions now render consistently at up to 2K resolution.

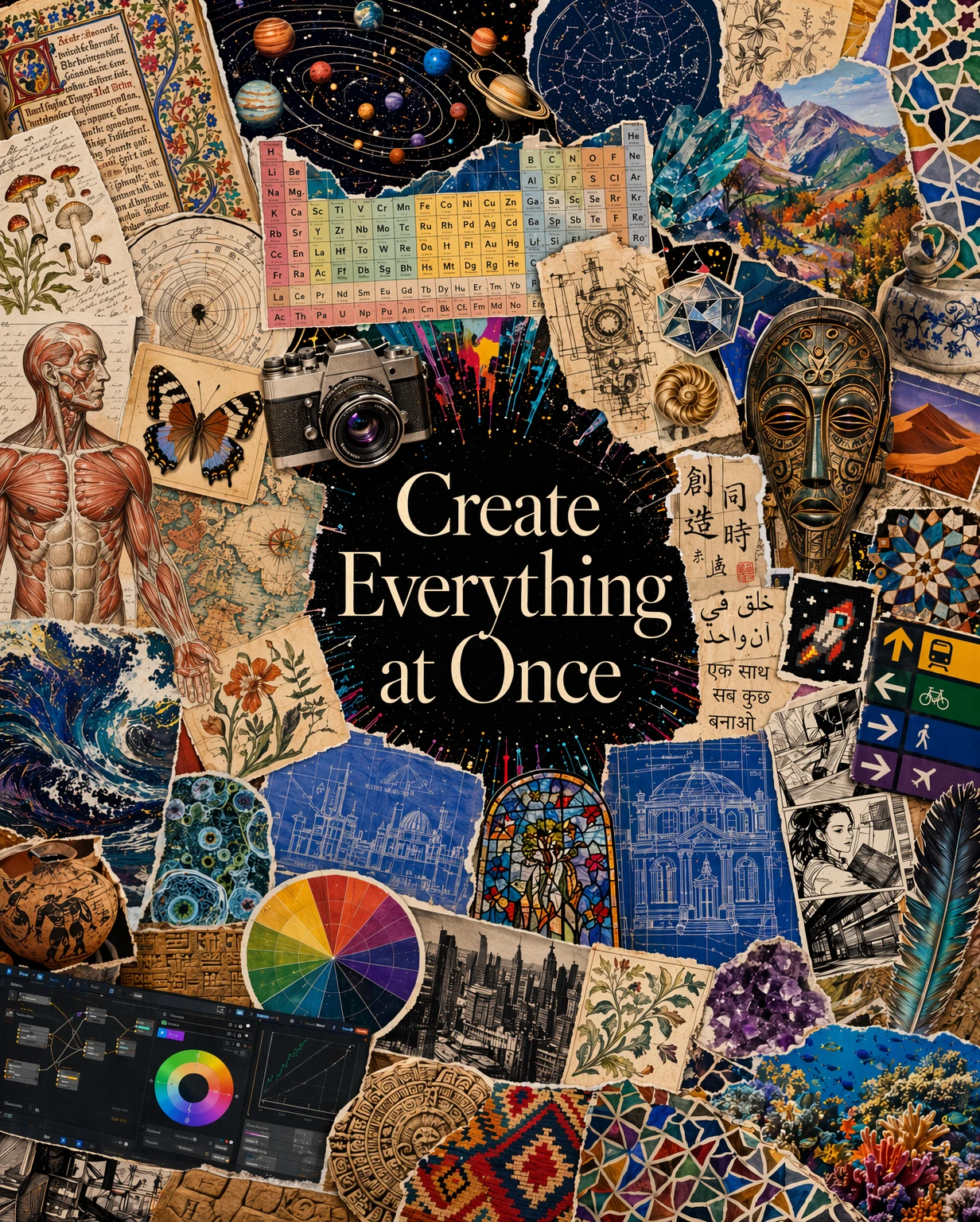

Text Rendering That Actually Works

AI image generation has historically mangled text. Images 2.0 addresses this directly. Supported scripts include:

- Latin alphabets

- Japanese, Korean, Chinese (Simplified and Traditional)

- Hindi, Bengali

- Arabic

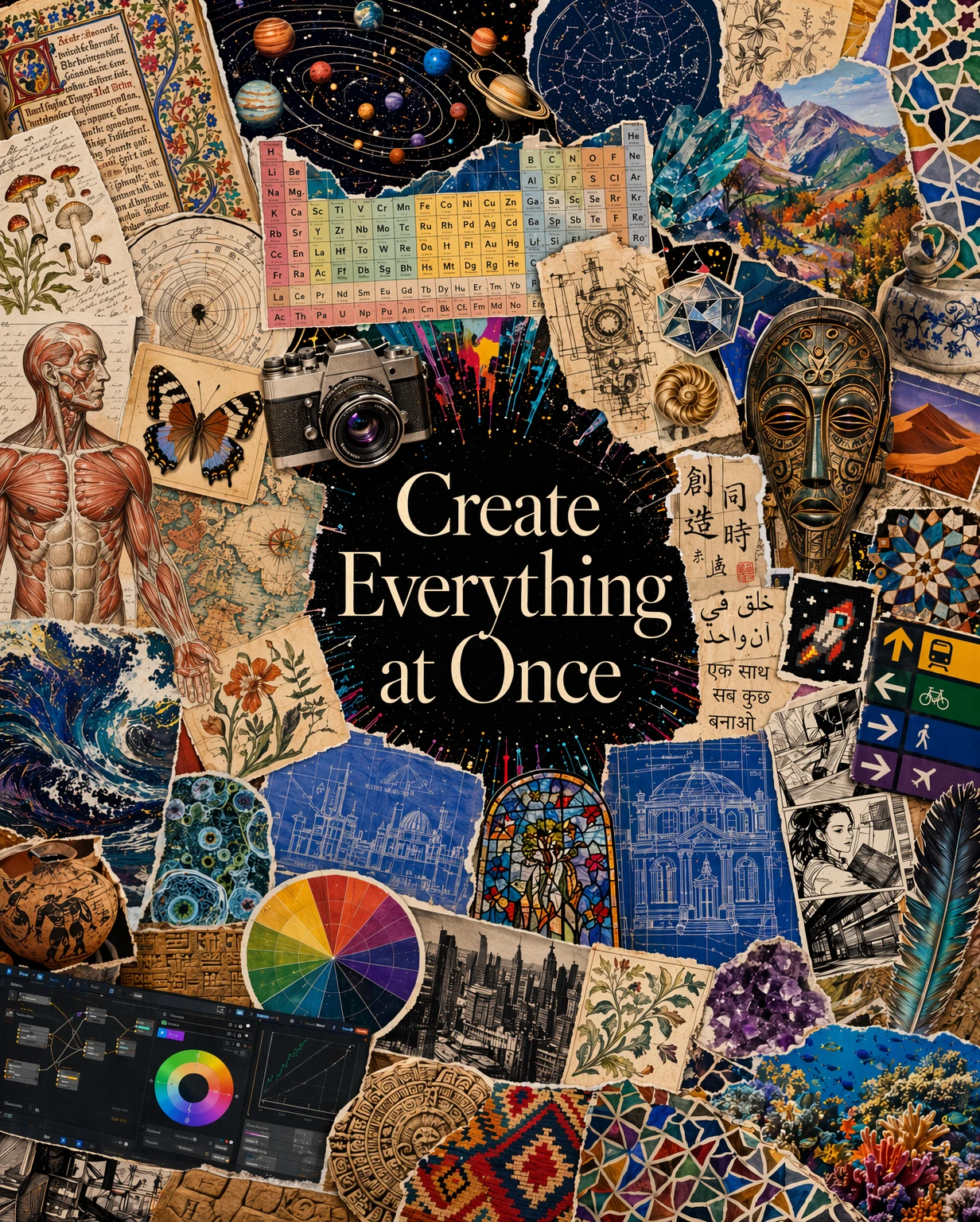

The example above shows the model rendering accurate text across Chinese, Japanese, Arabic, Spanish, Russian, and more — all within a single manga-style image. For designers working on localized content, this changes what's achievable in a single generation pass.

Up to 8 Coherent Images Per Prompt

In Thinking mode, a single prompt can produce up to eight images simultaneously. The critical distinction from past multi-generation approaches: characters, objects, and styles remain consistent across the entire set.

Use cases this enables:

- Campaign asset sets across formats (Instagram, Twitter, LinkedIn) in one call

- Visual storytelling with character continuity

- Product photography variants — same product, multiple angles

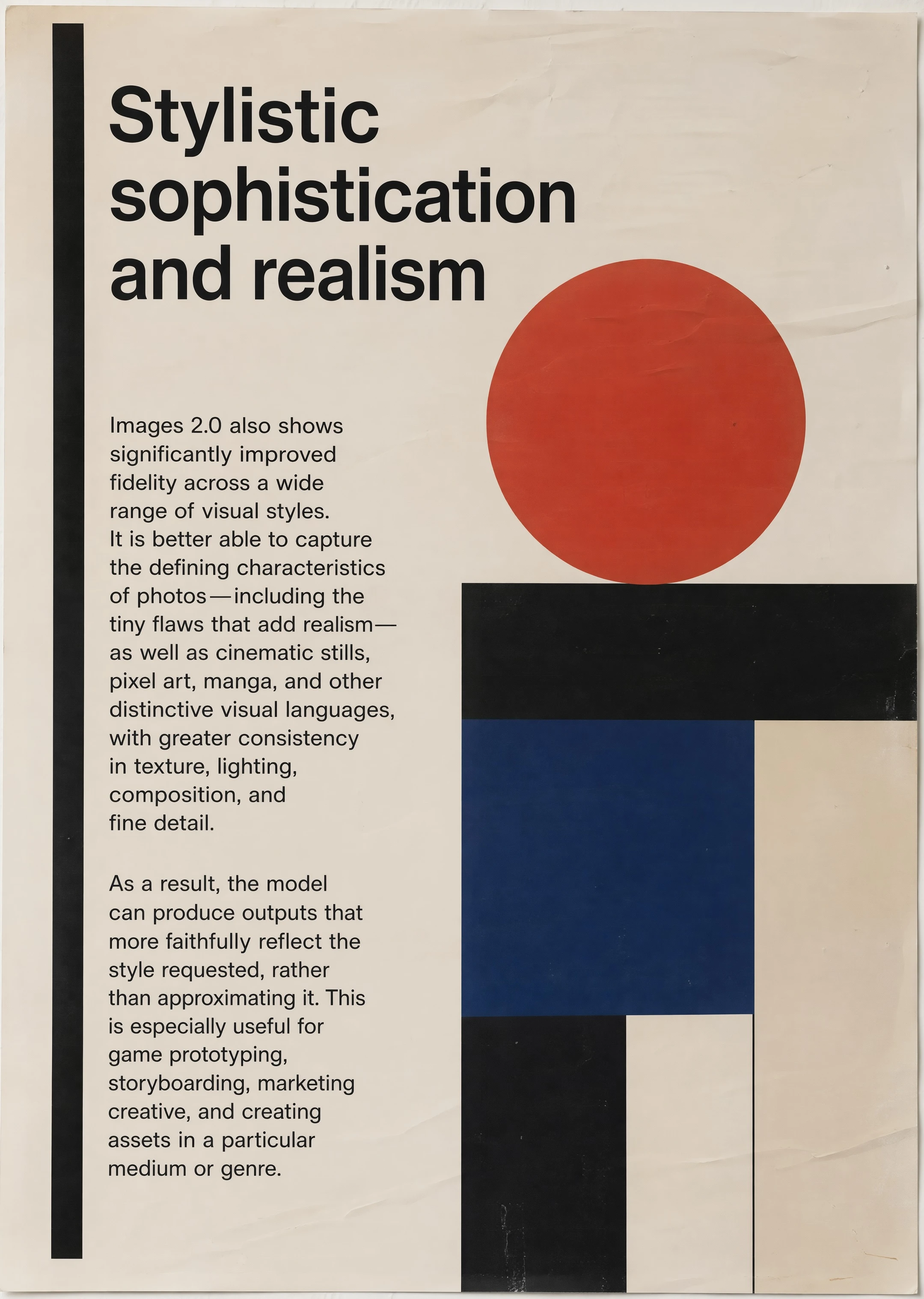

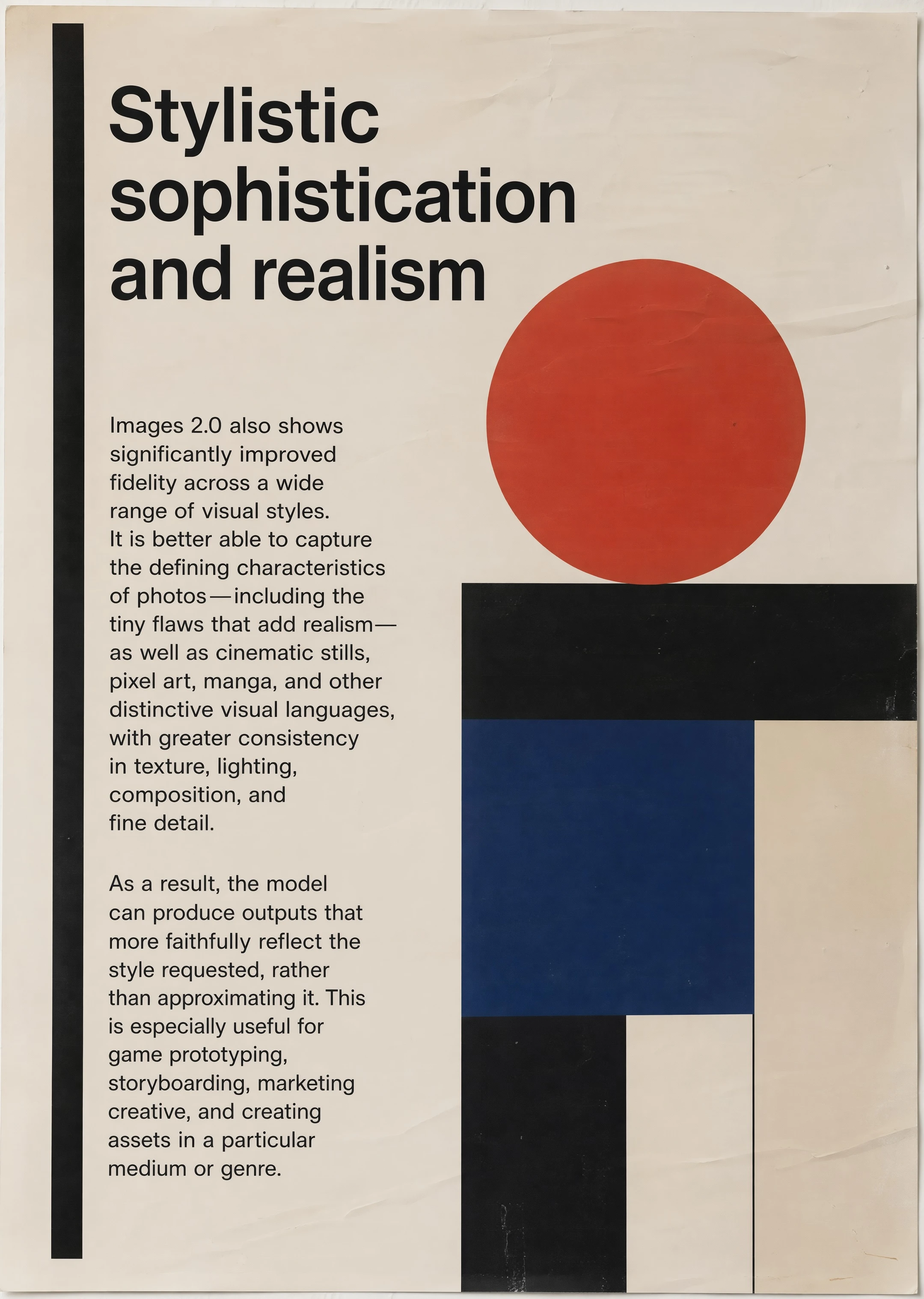

Stylistic Sophistication and Realism

Images 2.0 improves fidelity across a wider range of visual styles: photorealism, pixel art, manga, cinematic stills, and more — with better consistency in texture, lighting, composition, and fine detail.

2K Resolution

Experimental 2K support (up to 2560×1440) is available. This is a significant step beyond the standard 1024×1024. Supported aspect ratios range from 3:1 (ultra-wide) to 1:3 (ultra-tall), covering print, web, and social requirements.

Pricing and Access

ChatGPT Users

| Mode | Access | Capabilities |

|---|

| Instant | All plans including free | Core quality improvements |

| Thinking | Plus, Pro, Business, Enterprise | Web search, multi-image, output verification |

API Pricing (gpt-image-2)

Per-image cost at 1024×1024:

| Quality | Cost per Image |

|---|

| Low | ~$0.006 |

| Medium | ~$0.053 |

| High | ~$0.211 |

Token-based billing:

| Token Type | Price per 1M Tokens |

|---|

| Image Input | $8.00 |

| Image Input (Cached) | $2.00 |

| Image Output | $30.00 |

| Text Input | $5.00 |

Practical benchmark: 1,000 high-quality product images costs approximately $211.

Codex Integration

For ChatGPT and Codex subscribers, gpt-image-2 is accessible directly in the developer workspace without separate API configuration.

API Usage

from openai import OpenAI

client = OpenAI()

result = client.images.generate(

model="gpt-image-2",

prompt="Professional product shot, white background, studio lighting",

size="1024x1024",

quality="high",

n=1,

)

image_url = result.data[0].url

Supported parameters:

size: Any resolution from 256×256 to 2560×1440 (experimental)quality: "low", "medium", or "high"n: 1–8 imagesoutput_format: "png", "jpeg", or "webp"

For conversational image editing workflows, the Responses API supports multi-turn image editing — generate, refine, and iterate within a single session.

How It Compares to DALL-E 3

| Feature | DALL-E 3 | gpt-image-2 |

|---|

| Reasoning | None | Native (Thinking mode) |

| Text rendering | Poor | Multilingual support |

| Max resolution | 1024×1024 | 2560×1440 (experimental) |

| Multi-image consistency | None | Up to 8 images |

| Web search | None | Thinking mode only |

| API model name | dall-e-3 | gpt-image-2 |

| Retirement date | May 12, 2026 | Active |

If you have existing DALL-E 3 integrations, update the model parameter and review your prompts before May 12.

Competitive Positioning

vs. Nano Banana 2: Nano Banana is cheaper (~$0.02/image) and faster (1–3 seconds). gpt-image-2 is superior for text-heavy designs and complex multi-element layouts.

vs. Midjourney v8: Midjourney retains the edge on aesthetic composition for editorial and artistic work. gpt-image-2 leads on text accuracy, API availability, developer integration, and multi-image consistency.

Limitations

Honest assessment of current gaps:

- Knowledge cutoff: December 2025 — visuals tied to post-2025 events or products will be unreliable

- Logo accuracy is inconsistent — exact brand logo reproduction still requires human review

- Thinking mode latency — 15–30 second response times; not suitable for real-time applications

- No architecture disclosure — OpenAI hasn't specified diffusion vs. autoregressive, limiting optimization planning for API integrations

Migration from DALL-E 3

If you're currently using DALL-E 3, the migration is straightforward:

# Before

result = client.images.generate(

model="dall-e-3",

prompt="...",

)

# After

result = client.images.generate(

model="gpt-image-2",

prompt="...",

quality="high", # new parameter

)

Test your prompts before the May 12 deadline. Some prompt patterns that worked well with DALL-E 3 may need adjustment — gpt-image-2's reasoning layer interprets nuance differently.

Bottom Line

ChatGPT Images 2.0 makes a strong case for being the most capable commercially available image model right now. The reasoning integration solves real problems — complex scenes, accurate text, multi-image consistency — rather than adding features for demo purposes.

For developers on DALL-E 3: the May 12 deadline removes the "wait and see" option. For designers and content teams: the text rendering improvements alone make this worth testing immediately.

Access via ChatGPT: chatgpt.com — Thinking mode requires Plus or higher.

API access: Model name gpt-image-2, available through the standard OpenAI SDK.